
# Block 6 #Įach post has an associated comment thread, which we also want to collect for future possible analysis. # Block 5 #įor each post (or submission) in the iterator we defined in the previous block, get its ID and use it to get the full post data from PRAW, and append it to our Python dictionary we defined in Block 3. Additionally, PSAW doesn’t give us all the information we want per post, so we use it only to retrieve the post’s ID, and then use that ID in PRAW to get the remaining information. In this example I have limited it to 100 posts, but the Python keyword None may be used to go over all posts in the specified timeframe. We specify this set with the parameters we setup before: starting and ending timestamps, the specific subreddit, and the number of posts to retrieve. PSAW allows us to create an iterator that goes over a specific set of posts (also called submissions). Other metrics can be extracted - use this guide to find out exactly which you need. We are interested in storing the post’s ID, URL, title, score, number of comments, creation timestamp, and the actual body of the post (selftext). # Block 3 #ĭefine the Python dictionary on which each post will be stored (in memory, and later written to the previously defined CSV file path). # Block 2 #įor each subreddit in, start counting the extraction time for logging purposes, create the corresponding directory inside the current year’s directory, and define the path for the CSV file on which to store the corresponding posts that are to be extracted. These timestamps are provided as input to the PSAW request, specifying the window of time from which to retrieve data. Simply put, for each year between and, create a directory and define the beginning and ending timestamps (start of the current year until the start of the following year). Here is the full code, and then we will go over it, one block at a time. 
The main idea is very simple: for each year between and, create a directory, and for each subreddit in create a directory to store that subreddit’s posts during that year. import time def log_action(action): print(action) return Main algorithm This function can be easily altered to log to a file, instead of printing to the screen: that is why I encapsulated it into a function, instead of having the print directly built-in to the algorithm. This will be very insightful if we end up extracting lots and lots of data and want to make sure the program isn’t just stuck somewhere.

In our case, we will simply write a function that prints to the screen the action that was just taken by the algorithm, and how long it took to take that action.

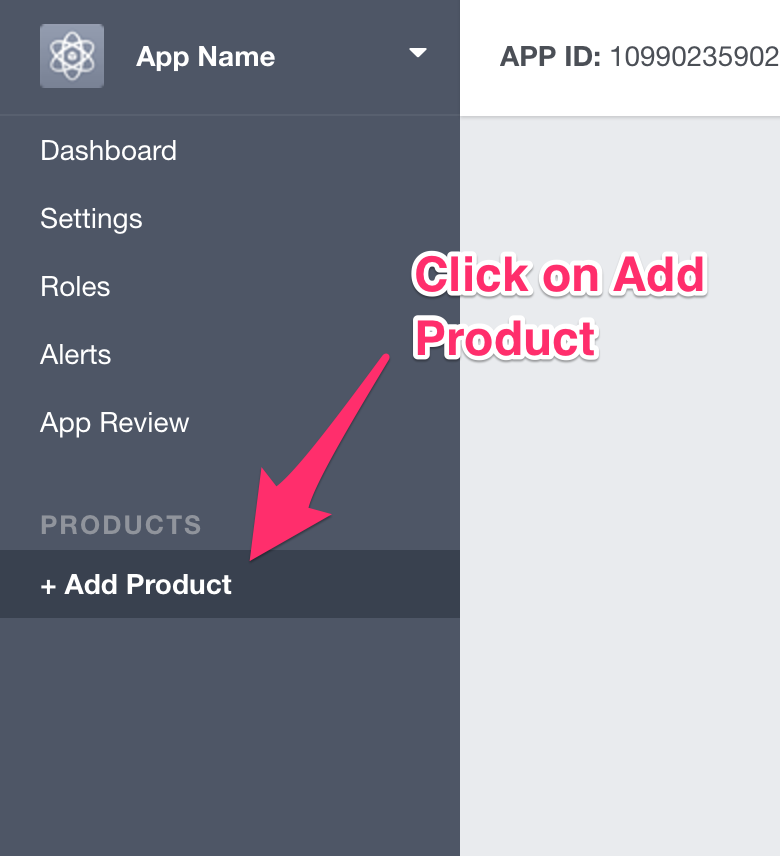

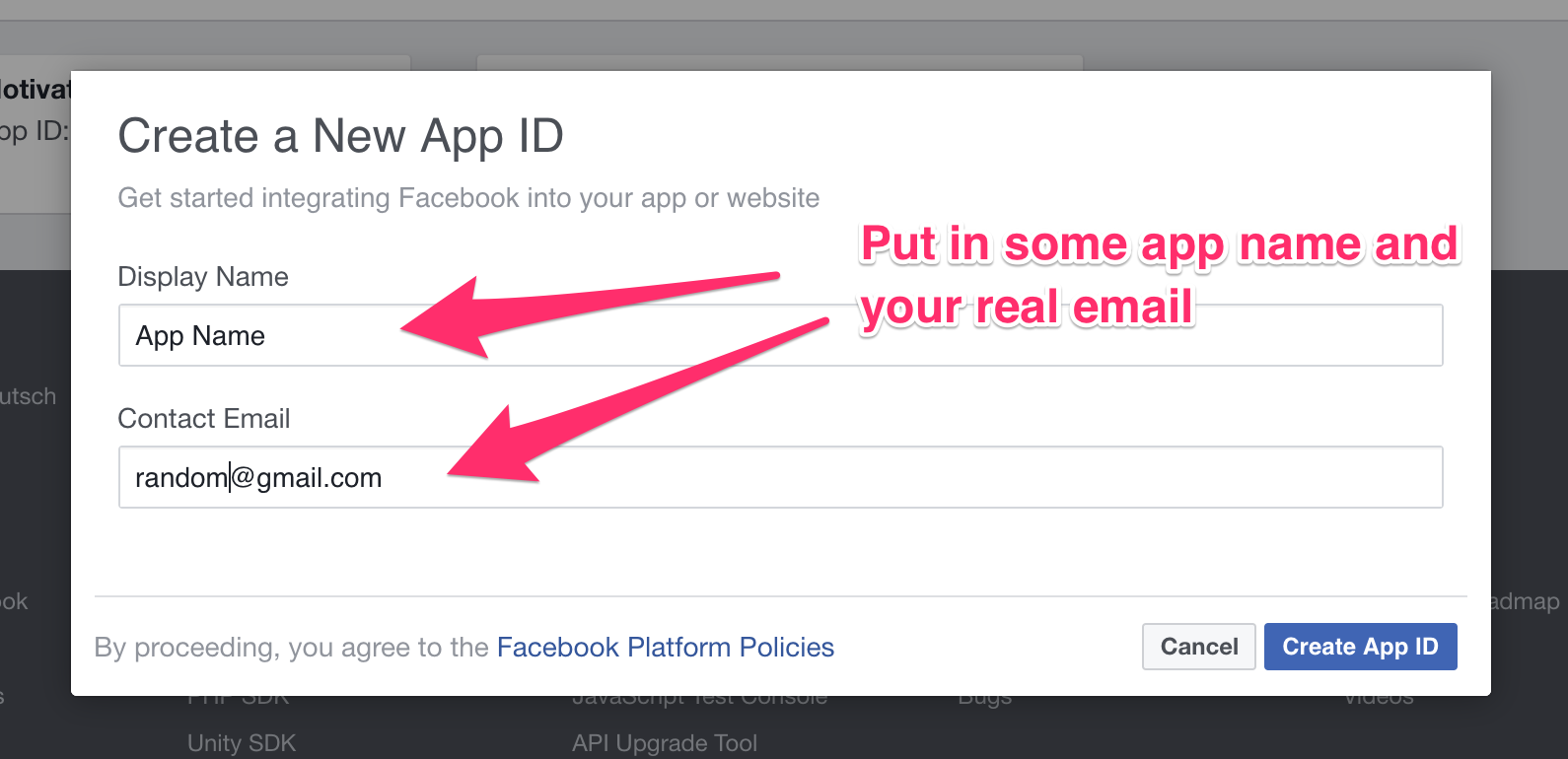

These are especially relevant when the process is long and you want to have an idea of how it is going, from time to time. Logging functions give you insights into the process that you are running. subreddits = start_year = 2020 end_year = 2021 # directory on which to store the data basecorpus = './my-dataset/' Logging functions Finally, we have to setup the directory on which to store the data. In this case, we will extract posts from the r/shortscarystories subreddit, posted between the start of 2020 and the end of 2021 (up to today). We also have to define the list of subreddits (it can be just one!) from which to extract posts, and the year-range, i.e., the start and end year from which posts will be retrieved. import praw from psaw import PushshiftAPI # to use PSAW api = PushshiftAPI() # to use PRAW reddit = praw.Reddit( client_id = "YOUR_CLIENT_ID_HERE", client_secret = "YOUR_CLIENT_SECRET_HERE", username = "YOUR_USERNAME_HERE", password = "YOUR_PASSWORD_HERE", user_agent = "my agent" ) You can follow this guide to quickly get your token and replace the relevant information in the code below, specifically, your client ID and secret, and your reddit username and password. However, in order to use PRAW (Reddit’s API), you need to set up your authorization token, so that Reddit knows your application. The use of PSAW is fairly straightforward. We will go over the necessary setup and logging functions, the main algorithm in full and block by block, and finally examples of the collected data and ideas for collection and exploration.

In this post, we will develop a tool in Python to collect publicly available Reddit posts from any (public) subreddit(s), including their comment thread, organised by year of publication. Some public subreddits can be deep wells of fun and interesting data, ready to be explored! However, it can be daunting to even think of how to collect that data, especially in large amounts. Reddit is a social media platform structured in sub-forums, or subreddits, each focused on a given topic.
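The year-by-year, subreddit-by-subreddit collection loop described in this post can be sketched roughly as follows. This is not the original listing, only a minimal sketch under several assumptions: the function names `collect`, `year_window` and `write_csv`, the output file names `posts.csv` and `comments.csv`, the use of UTC for the year boundaries, the inclusive year range, and the optional `logfile` argument of `log_action` are all hypothetical. The `api` and `reddit` arguments are expected to behave like the `PushshiftAPI()` and `praw.Reddit(...)` objects from the setup.

```python
import csv
import os
import time
from datetime import datetime, timezone

# Fields kept for each post (the ones listed in the text)
POST_FIELDS = ("id", "url", "title", "score", "num_comments", "created_utc", "selftext")


def log_action(action, logfile=None):
    """Print `action`; if `logfile` is given (hypothetical extra), append it there too."""
    print(action)
    if logfile is not None:
        with open(logfile, "a", encoding="utf-8") as f:
            f.write(action + "\n")


def year_window(year):
    """Epoch timestamps (UTC assumed) for the start of `year` and of the following year."""
    start = int(datetime(year, 1, 1, tzinfo=timezone.utc).timestamp())
    end = int(datetime(year + 1, 1, 1, tzinfo=timezone.utc).timestamp())
    return start, end


def write_csv(path, header, rows):
    """Write a header row followed by the data rows to `path`."""
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(header)
        writer.writerows(rows)


def collect(api, reddit, subreddits, start_year, end_year, basecorpus, limit=100):
    """Hypothetical driver: one directory per year, one sub-directory per subreddit."""
    for year in range(start_year, end_year + 1):       # end year included (assumption)
        ts_after, ts_before = year_window(year)
        for subreddit in subreddits:
            started = time.time()
            out_dir = os.path.join(basecorpus, str(year), subreddit)
            os.makedirs(out_dir, exist_ok=True)

            posts = {field: [] for field in POST_FIELDS}
            comments = []

            # PSAW is only used to find the post IDs in the time window
            hits = api.search_submissions(after=ts_after, before=ts_before,
                                          subreddit=subreddit, filter=["id"], limit=limit)
            for hit in hits:
                # PRAW provides the full post data for each ID
                post = reddit.submission(id=hit.id)
                for field in POST_FIELDS:
                    posts[field].append(getattr(post, field))

                # Expand "load more comments" placeholders, then flatten the thread
                post.comments.replace_more(limit=None)
                for comment in post.comments.list():
                    comments.append((post.id, comment.id, comment.body))

            write_csv(os.path.join(out_dir, "posts.csv"), POST_FIELDS, zip(*posts.values()))
            write_csv(os.path.join(out_dir, "comments.csv"),
                      ("post_id", "comment_id", "body"), comments)
            log_action(f"[{year}] r/{subreddit}: {len(posts['id'])} posts, "
                       f"{len(comments)} comments in {time.time() - started:.1f}s")
```

With the objects from the setup, this would be called as `collect(api, reddit, subreddits, start_year, end_year, basecorpus)`; passing `limit=None` asks PSAW for every post in the window rather than the first 100. Passing the two clients in as arguments, rather than relying on globals, also makes it possible to try the loop with stand-in objects before spending API calls.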
